What drove support calls

- Scenario-based policy clarification

- Questions about what happens next in a process

- Uncertainty about how to apply a rule correctly

- Status and process guidance in edge situations

Responsible AI

Designing a broker-first AI experience that prioritised confidence over novelty.

After the CommBroker redesign, brokers could find information more easily, but they still needed help interpreting policy in specific scenarios. I led design on CommBank's first broker-facing Gen AI pilot, shaping an assistant that answered scenario-based questions with guardrails and attribution while delivering measurable business value. I also helped define the trust model, source strategy, and evaluation criteria for the pilot.

The assistant was designed as a guidance layer inside the broker workflow, not a flashy standalone chatbot.

Challenge

The CommBroker redesign improved discoverability, but brokers still called support when they needed help interpreting policy in their specific scenario. The remaining problem was not where content lived. It was whether the interface could help translate policy into usable next-step guidance.

Context

This pilot did not begin with a blank page. It built on the JTBD research, call-driver analysis, and content cleanup that came out of the CommBroker redesign. That sequencing mattered. A Gen AI assistant grounded in inconsistent or legacy content would have amplified uncertainty instead of reducing it.

- Mapped the difference between finding policy and applying policy to a live scenario.

- Focused the pilot on question types that genuinely drove operational cost and broker frustration.

- Ensured the assistant had cleaner, approved source material to draw from.

The AI needed to act as a guidance layer, not a shinier version of search.

Principles

In a regulated environment, a confident but incorrect answer is worse than a slower, more careful one. I designed the pilot around strict product principles that prioritised trust, attribution, and operational value over anything that merely felt novel.

Design

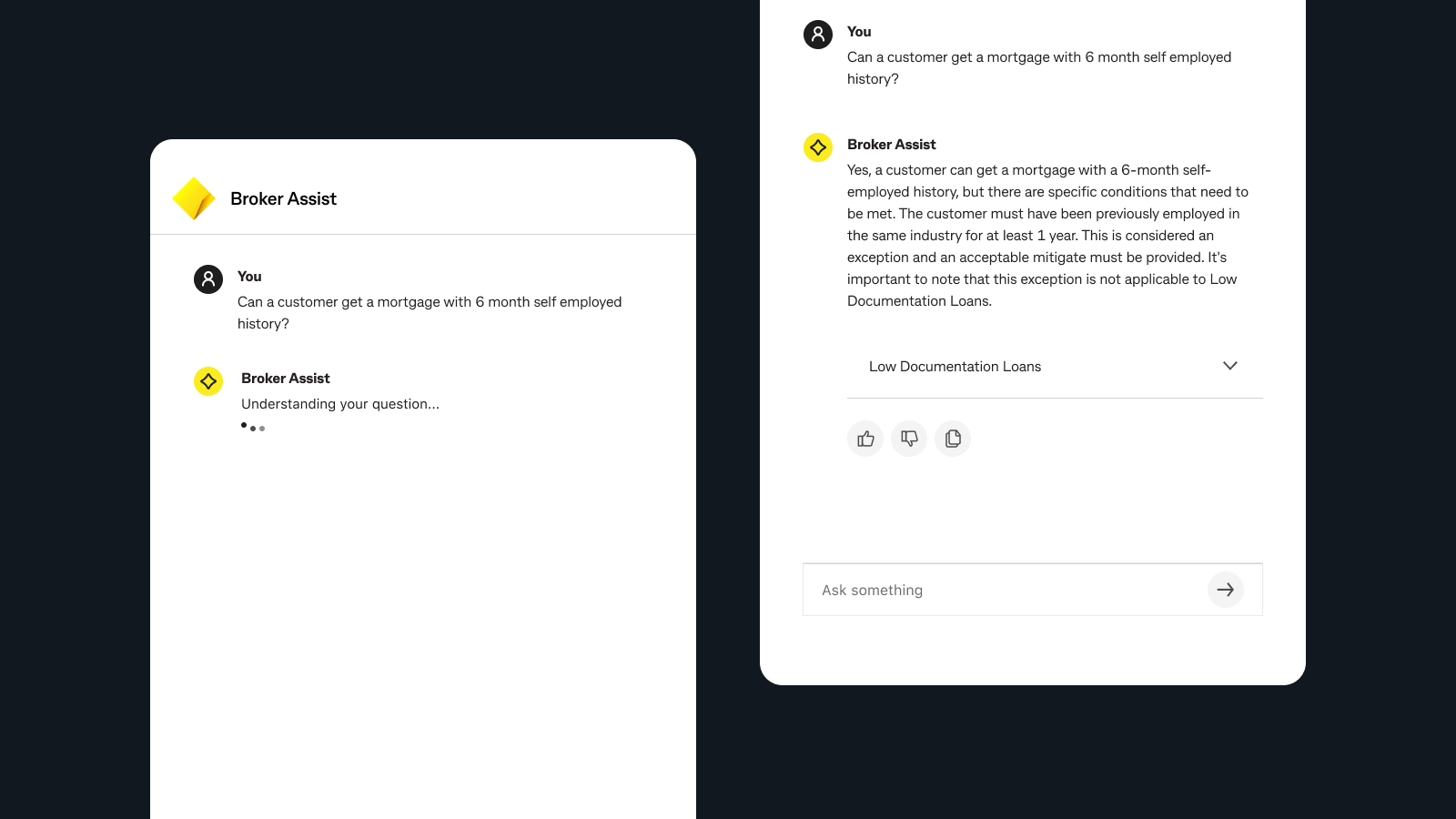

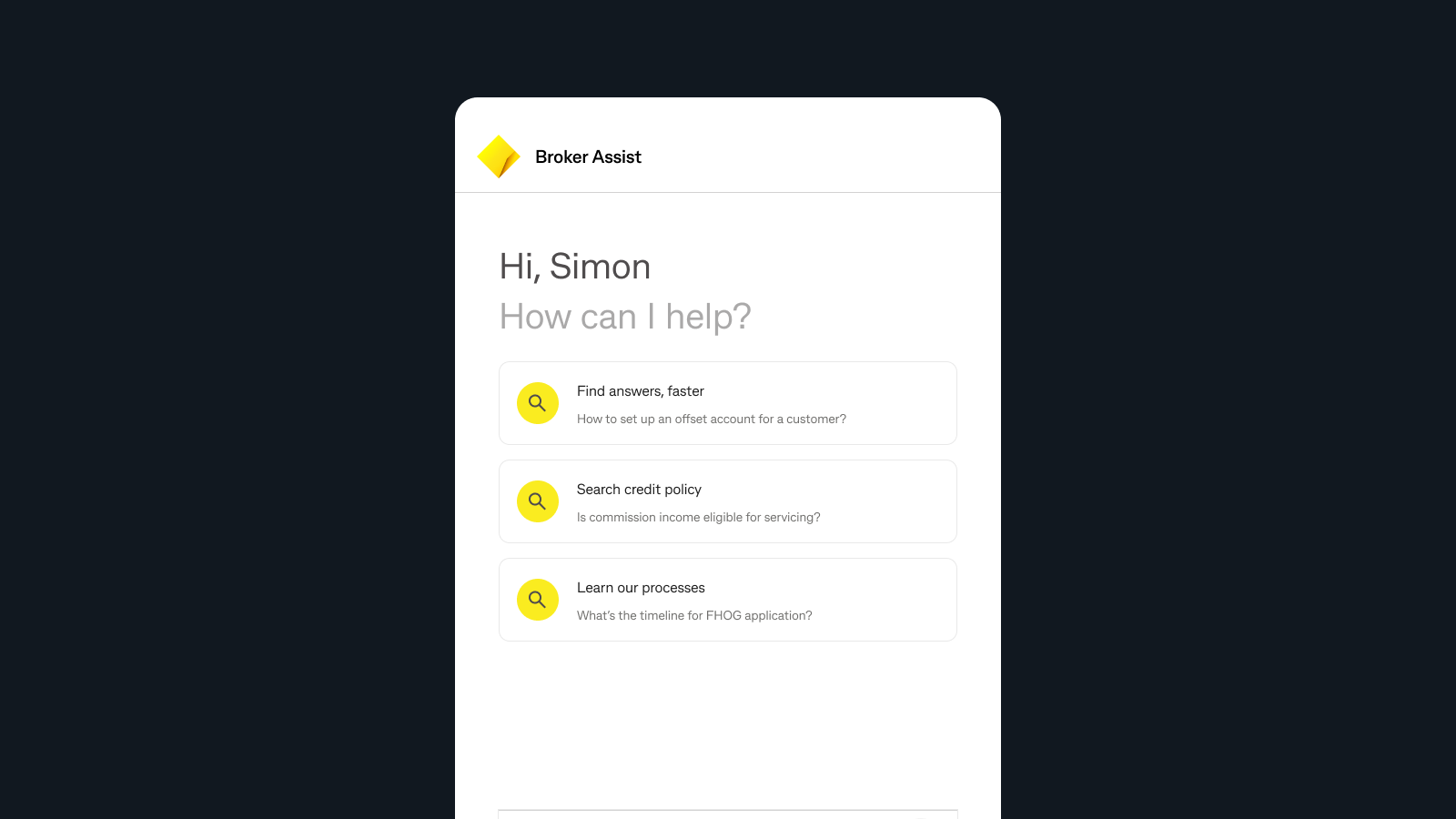

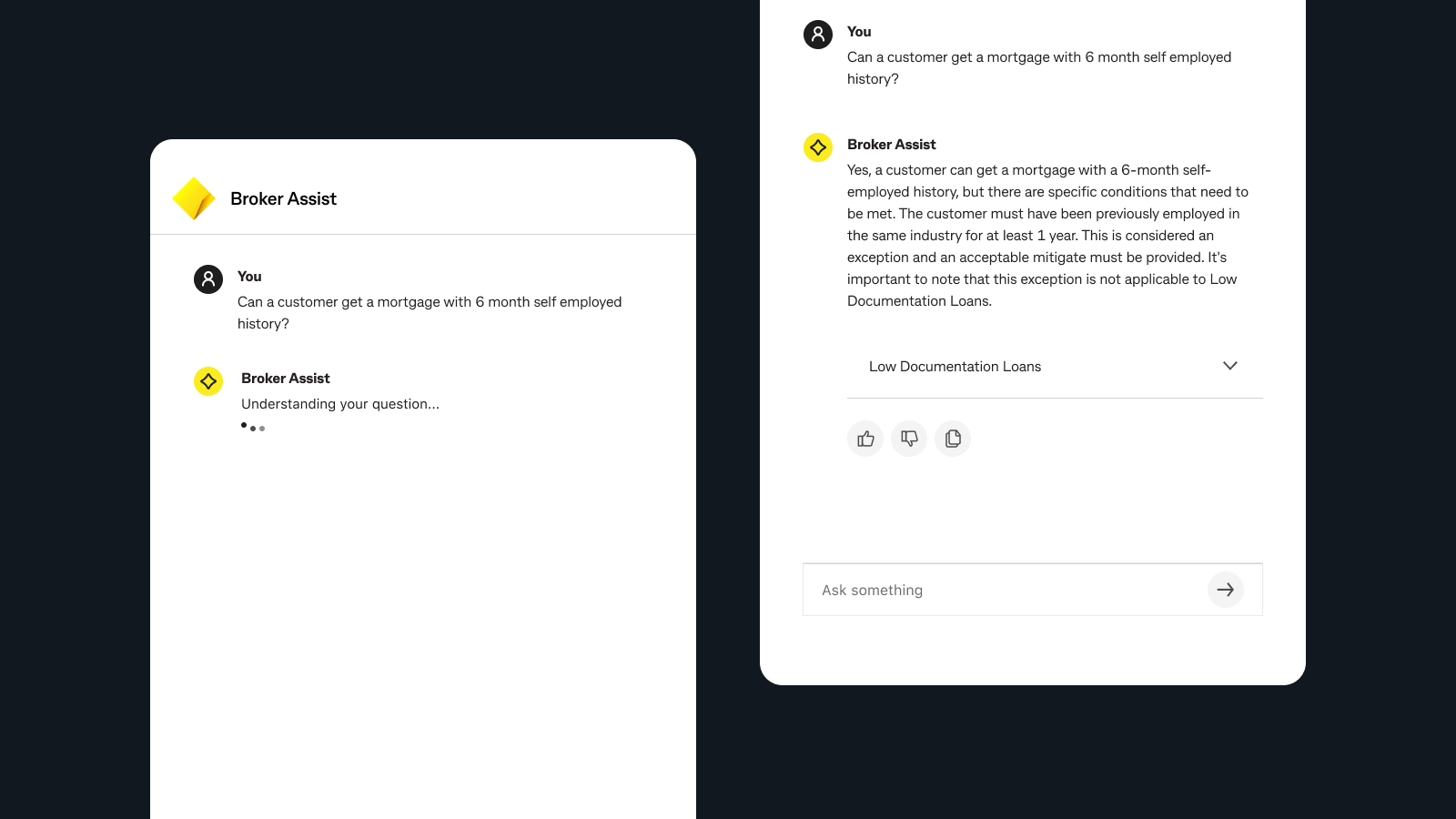

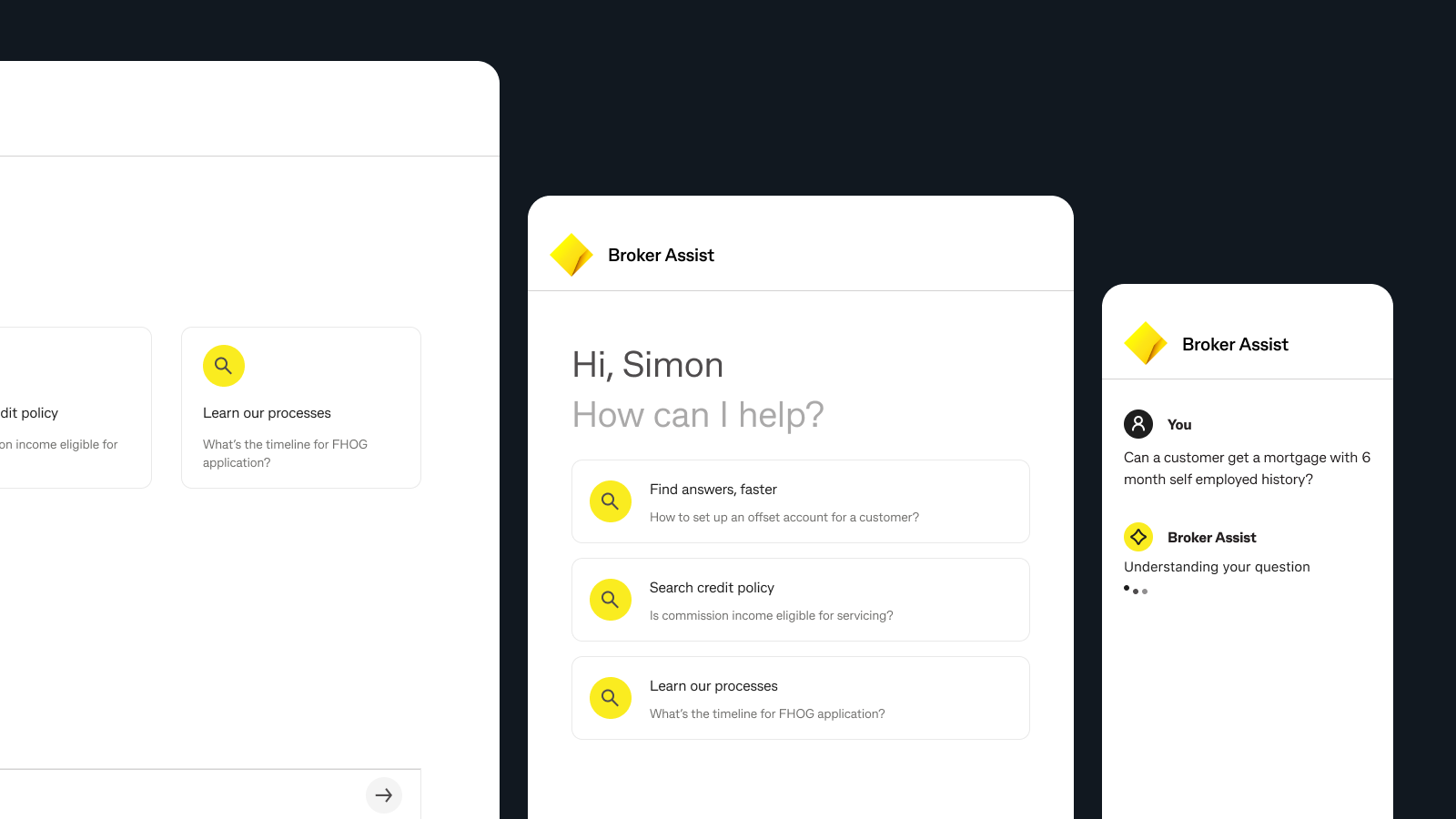

I designed Broker Assist as an integrated product surface inside CommBroker. The experience needed to answer scenario-based questions quickly, show where the answer came from, and make brokers more comfortable acting on the information they received.

The design intentionally constrained the answer space so the experience could stay dependable in a high-risk domain.

Keeping the assistant inside CommBroker reduced context switching and preserved broker workflow continuity.

Seeing the underlying policy source materially increased broker confidence in the AI output.

The north star was a broker who did not need to call support because the product answered the question well enough.

"I don't need to call anyone for this."

Results

Brokers described the experience in practical terms: helpful, usable, instant. That tone mattered. In this context, measured adoption language was stronger than excitement because it suggested the assistant was becoming a reliable tool rather than a novelty. The point of the pilot was to reduce routine interpretation calls while keeping broker confidence high.

Reflection

This project sharpened my view that good AI product design is mostly upstream work: clearer use cases, stronger source content, better trust mechanisms, and tighter success criteria. The model itself is only one part of the experience.

It also reinforced a principle that applies beyond AI. In regulated environments, credibility is not decorative. It is the product. If the user cannot trust the answer, the interface has failed no matter how elegant it looks.
