Responsible AI

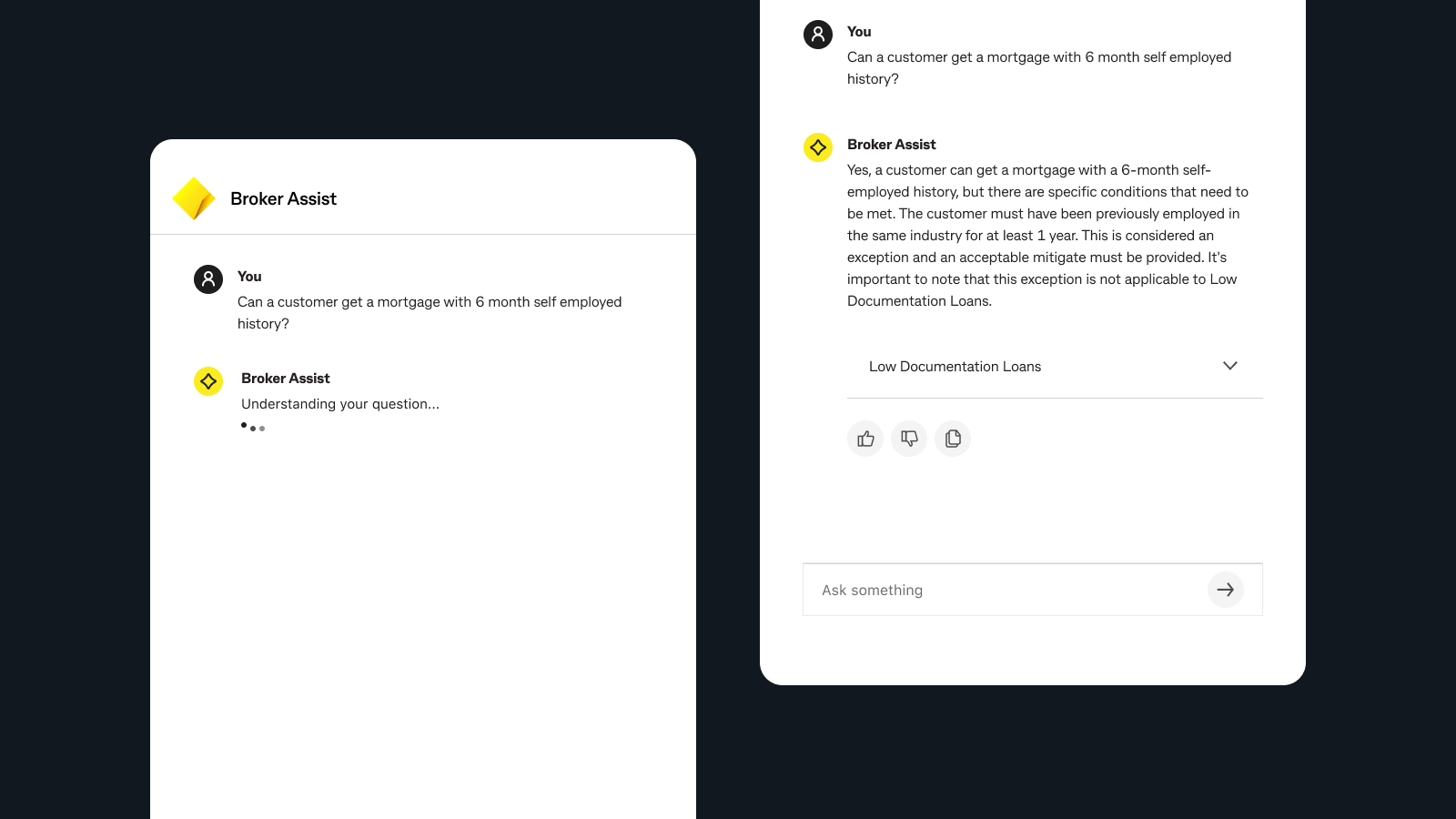

The visible answer is the least interesting part of many AI products. Quality is usually decided earlier: by the use case, the source content, the guardrails, and the criteria for when an answer should or should not be trusted.

Framing

AI projects often start too close to the technology. Teams identify a model capability, then look for a place to insert it. The result is usually shallow value: novelty, faster search, or conversational gloss over a problem that has not been framed precisely enough.

Inputs

If the problem is vague, the assistant will feel vague too. Good AI starts with a narrow, meaningful job.

Inconsistent or outdated content will produce inconsistent or outdated answers, no matter how polished the interface looks.

Constraining the answer space is often a design decision, not just a technical one.

If success is defined as “engagement,” the product will often optimize for novelty over usefulness.

Trust

Attribution, source visibility, clear fallback behavior, and known limits do more for adoption than a clever tone of voice. A measured answer with visible provenance is often more usable than a beautifully phrased answer that feels ungrounded.

That is why I think of responsible AI as interface work as much as policy work. The product needs to communicate what it knows, where that knowledge comes from, and when the user should still seek human support.

Takeaway

When the upstream product work is strong, the interface feels confident without overselling itself. That kind of confidence is what makes an AI product useful over the long term, especially in domains where being wrong carries real cost.
